A New Beginning

Posted: July 30, 2013 Filed under: news, predictions, tech | Tags: 7, apple, iCloud, ios, iphone, ui, UX

So, Apple has unveiled iOS 7 to much discussion, hand-wringing, and cheers. There are lots of things that I feel Apple is promising to do right with this release, and a number of things that we will, of course, need to see to believe.

Photos

One of the most prevalent activities that iOS users engage in is photo sharing. Apple’s recently-released “every day” video showcases the power of the iPhone as a camera. iPhone users know that their iPhone is probably one of the best cameras they’ve owned, and the millions of pictures snapped daily obviously underscore that.

I was intrigued by the keynote’s handling of photos, namely the application of filters and the introduction of “Shared Photo Streams” into iCloud. To be honest, I felt that this was a feature sorely missing from iOS and iCloud for a long time. The idea of a photo stream curated by a single person is fine if one of your friends happens to be a professional photographer or something like that, but most situations in which people are snapping photos tend to be social, with multiple people desiring to both view and (most likely) contribute to an album of the event. The trick lies in determining the canonical center of the stream. Who “owns” the photo stream? When people contribute to the photo stream, are they adding to a single user’s photos, or are they, in effect, “copying” that stream to their own photo collection and then adding to it, which can then be seen by other parties? Or, are the photos stored on Apple’s servers, where multiple parties “own” photos, can add to the stream, and then define who else “owns” those photos? “Own”, here, is an operative word, since ownership of the photos is tough to nail down, in this case.

I’ve always wondered how something like this would work, but it’s a problem that Apple absolutely has to tackle in order to stay relevant. As people add more and more photos of their lives to their devices, the storage of those photos becomes of paramount importance, followed closely by how people identify and integrate those photos into their identity. What has become increasingly obvious is that people don’t just craft an identity that is tied to a mobile device; they create a digital identity that the mobile device allows them to access. In order for these technologies to be relevant, they have to allow people to share photos and feel comfortable about storing them in a way that is non-destructive and still allows them to reference past events with ease. It’s clear that Apple is now moving towards more meaningful photo sharing, but it remains to be seen if they can take this idea and use it to deliver the type of interconnectivity that people implicitly ask for.

Inter-app Data

One of the things that Apple did not address, and something I’ve heard from people who have recently switched away from iOS as their primary mobile platform, is that iOS hamstrings users by not allowing them to easily pass data between apps. While I agree with some parts of this argument, I can see Apple’s stance on the idea of inter-app data sharing. The scenario that I often hear from heavy Android users is that things like taking notes, or saving PDFs from one app to another, etc. are easier on Android. I don’t agree with this because I do those very same things every single day with iOS and, ever since Apple started allowing for custom URLs to pass data from one app to another, have never had an issue with that. As such, I think I understand their stance – that Android allows a freer exchange of data between apps using a more-or-less centralized file system.
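For anyone who hasn’t seen the custom URL trick up close, here is a minimal sketch, in Python rather than anything iOS-specific, of how one app might pack data into a URL and how the receiving app might unpack it. The `notesapp` scheme, the `new` action, and the `title` and `body` parameters are all made up for illustration; real apps define their own schemes, and this is not any particular app’s API.

```python
from urllib.parse import urlencode, urlsplit, parse_qs


def build_app_url(scheme, action, **params):
    """Pack an action and its parameters into a custom-scheme URL."""
    query = urlencode(params)  # percent-encodes values, spaces become '+'
    return f"{scheme}://{action}?{query}" if query else f"{scheme}://{action}"


def parse_app_url(url):
    """Unpack a custom-scheme URL into (scheme, action, params)."""
    parts = urlsplit(url)
    # parse_qs returns a list per key; keep the first value for simplicity
    params = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return parts.scheme, parts.netloc, params


# Hypothetical sending app: hand a note over to a hypothetical "notesapp"
url = build_app_url("notesapp", "new", title="Groceries", body="eggs & milk")

# Hypothetical receiving app: recover the action and the data
scheme, action, params = parse_app_url(url)
```

The point is only that the data rides along inside the URL itself, which is why this approach works without a shared file system.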

One thing that we saw in the WWDC keynote, however, is the introduction of a new tagging feature in OS X 10.9, which, I believe, is going to be Apple’s eventual answer to the file system. Instead of files being stored on the device, in a folder, they’ll be stored in iCloud, accessible as clusters of files related to a specific idea. This is finally the intelligent organization that Palm’s WebOS got right. Ultimately, people don’t really organize their data by app; they organize it by idea or topic, which is a far cry from having data “live” in an app.

I think the ultimate goal is to enable a user to cluster files together around a central theme or project that they may be working on, and make that cluster available as an item in an app that keeps track of and syncs tags across platforms. Ostensibly, the user could open the app, see all of their tag groups, and (possibly using a Photos app-like pinch-to-spread gesture) see all of the files in that tag group. Tapping on a file would open a list of corresponding apps that are capable of handling that type of file. Interestingly enough, this may also allow Apple to put a little more control in the user’s hands by allowing the user to pick which app would be the default handler of that file type. In this manner, people don’t necessarily have to know where to look for their files; they need only open the “Tags” app, find the group they want to work on, and tap the file they want to work on in that group. The OS then passes that file to whatever helper application the user has selected as default, and they’re off to the races. A system like this wouldn’t be able to satisfy every Android lover’s desire for a true file system, but Apple wouldn’t need to – the average user would see this as a new feature, and customers on the fence may see this as a tipping point.
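To make the idea a little more concrete, here is a rough sketch in Python of what such a “Tags” app might track. Everything here is hypothetical: the `TagIndex` class, its method names, and the sample file and app names are my own inventions for illustration, not anything Apple has shown.

```python
from collections import defaultdict
from pathlib import PurePosixPath


class TagIndex:
    """Hypothetical model of a "Tags" app: files grouped by tag, apps by file type."""

    def __init__(self):
        self._files_by_tag = defaultdict(set)   # tag -> set of file names
        self._handlers = defaultdict(list)      # extension -> apps that can open it
        self._default_handler = {}              # extension -> user's chosen app

    def tag(self, filename, *tags):
        """Attach one or more tags to a file."""
        for name in tags:
            self._files_by_tag[name].add(filename)

    def files_in(self, tag):
        """List every file in a tag group, sorted for stable display."""
        return sorted(self._files_by_tag.get(tag, ()))

    def register_handler(self, extension, app):
        """Record that an app is capable of opening a given file type."""
        if app not in self._handlers[extension]:
            self._handlers[extension].append(app)

    def set_default(self, extension, app):
        """Let the user pick the default app for a file type."""
        if app not in self._handlers.get(extension, []):
            raise ValueError(f"{app} cannot open {extension} files")
        self._default_handler[extension] = app

    def apps_for(self, filename):
        """Apps to offer when the user taps a file."""
        ext = PurePosixPath(filename).suffix.lower()
        return list(self._handlers.get(ext, []))

    def open_file(self, filename):
        """Return the app the file is handed to, or None if nothing can open it."""
        ext = PurePosixPath(filename).suffix.lower()
        apps = self._handlers.get(ext, [])
        return self._default_handler.get(ext, apps[0] if apps else None)


index = TagIndex()
index.tag("budget.xlsx", "kitchen remodel")
index.tag("floorplan.pdf", "kitchen remodel", "house")
index.register_handler(".pdf", "Reader")
index.register_handler(".pdf", "Annotator")
index.set_default(".pdf", "Annotator")
```

Notice that nothing in the sketch cares where a file physically lives; the user only ever deals with the tag group and the app that opens the file.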

Multi-tasking

This one is weird to me, but I like the way that Apple has addressed it in the update, with the WebOS-style “cards” interface informing this component of the OS heavily. The ability to see live updates of each app, or at least the current status of each open application as the user left it, is another way Apple brings parity with Android, but does it better. I’ve seen Android’s task-switching waterfall, and it has always felt too sterile to be enjoyable to use, although I believe that’s more of a fault of the OS design language as a whole than that specific part of the interface.

The Icons

There have been a not insignificant number of words spoken about the changes Apple has made to the look of the stock app icons in iOS 7. To be honest, I feel like this whole discussion is completely moot. App icons are incredibly important, to be sure – they are the way a user identifies your application in the sea of other apps on their phone – but they are somewhat arbitrary. They need to be well-designed, but there is a certain “minimum effective dose” that allows most people to identify the app they’re looking for and associate it with the task they’re looking to accomplish.

When Apple made the choice to redesign the stock app icons, the folks behind Apple’s design choices exposed their design process as well as the grid-based layout system that informed the icon designs. There were comments made by graphic designers about how Apple’s layout choices were half-baked or wrong, and other comments that discussed how the color choices were catering to a younger generation, or the aesthetic biases of the cultures in new and emerging markets. Regardless of the reason behind the choices, I can’t help but relegate all of this commentary to the trash heap for the same simple reason: all of these comments are about a subjective experience. Of course Jony Ive wants to create an experience that is beautiful, familiar, approachable, friendly, and functional…but there are so many ways to accomplish this, and all of the commentary comes from a single data point in the universe. Even assuming that all of these designers and amateur critics were able to ascertain some objective truth about these designs that was universally applicable, they all have differing opinions – some of them conflicting – and it must thus follow that they’re either all right, or all wrong. I’m clearly in the latter camp. People are going to take a look at the icons and freak out because they’re different, and then everything will go back to normal and everything will be fine because, in truth, app icons only matter as pointers to something a user wants to accomplish. Once users draw new associations in their minds, they’ll be fine.

The Little Things

There are, of course, things that Apple hasn’t mentioned or brought up, most likely because they simply didn’t have enough time to do so, but I feel like I should mention them here for the sake of completeness.

While I know that not all of these things will be addressed (or even should be addressed) because of the focus that Apple is trying to maintain with iOS, there are some things that venture into that grey area that exists between the worlds of Mac OS and iOS. The first of these is the way the OS (and many apps) handle external keyboards. Safari, for instance, is able to handle a “Tab” keystroke, but does not recognize Command+L to put the cursor in the address bar, or Command+W to close an open tab. These aren’t necessarily “shortcomings” of the OS, but nor do they enhance the user experience. I’ve never thought to myself “Boy, am I sure glad they left out those keystrokes! My life is so much easier!” With this type of behavior, I’m not sure if the omission is intentional or not. Apple is a very intentional company, but something like this feels like an oversight as opposed to a deliberate design decision.

What’s Next?

Naturally, when people see new OS announcements from Apple, they assume that new hardware is going to follow closely behind. Something that I heard recently was that Apple’s new design, while beautiful on all current iOS devices, absolutely sings and looks right at home on the new devices that Apple has lined up for the fall. What these devices are is anyone’s guess, but I don’t think anyone would lose betting on a new iPhone. New iPad minis, iPads, and possibly iPod Touch units may also be in the works, but it isn’t completely clear yet exactly how these things will take shape, and what sort of changes we can expect. I love looking forward, but I don’t “do” rumors, so I’m not going to waste any time on speculating about what Apple is working on.

Ultimately, the new iOS version that Apple has introduced to the world looks great and, based on what I’ve heard, feels amazing. I have no desire to start ripping on an OS that’s in beta, nor do I have the desire to laud it. While it’s exciting to see a refresh to the world’s most important mobile OS, the proof will be in the pudding once it’s been finalized and released.

No Good Deed Goes Unpunished

Posted: October 25, 2012 Filed under: explanations | Tags: apple, china, foxconn, iphone, manufacturing

I’ve been reading a significant amount of backlash against the iPad mini event, focusing specifically on the lamentable lack of the “one more thing” moments of old. The typical banter has something to do with leaks coming from places that Apple has a hard time monitoring (China), and that it does everything it can to keep things hush hush in a world in which money talks, and loudly. My main point of contention with this sentiment is that it implies that Apple can’t keep secrets about its new products anymore.

I think that’s a silly idea.

Consider, for a moment, the scale of manufacturing that has to be brought to bear in order to manufacture products for Apple on the scale we are currently seeing. It has to be massive, and requires the coordinated efforts of millions of people, literally. From product inception through design, fabrication, and manufacture, there are literally millions of people involved, taking care of everything from the actual design and sourcing of raw materials to the shipping to your doorstep. Truth be told, their job isn’t even over when you have the product in your hands; they still have to support it and continue developing new software. The human life energy devoted to the manufacture and support of a single iPad is immense.

As such, consider the original iPhone, first introduced in January of 2007, but released in June of the same year. That’s a gap of nearly six months from introduction to purchase. In contrast, iPhone 5 was revealed on September 12, went on pre-sale two days later, and was available for retail purchase nine days after the introduction, on September 21st. The full implication of that is that Apple’s manufacturing machine has to be at work for months before the device truly sees the light of day. In short, more human beings (see above) are aware the device exists for more time before the general public can purchase the device.

With the original iPhone, Apple had the luxury of producing prototypes and testing them in relative seclusion. Apple no longer has that luxury because it works on some of the tightest schedules a person can conceive of.

Think about it: if Apple wanted to prototype a totally new product using in-house fabrication today, they could do it. They could show a working device to a room full of awed spectators who had no idea that such a thing existed, but they wouldn’t be able to put it in your hands until months later, and that isn’t something that Apple wants to do – they want you to make a decision and strike while the iron is hot.

So when you’re done watching the reveal of a new Apple product from another Apple device that’s barely a month old, remember that things weren’t always this way. You can’t manufacture your cake and be surprised by it, too.

Things Left Unsaid

Posted: August 25, 2012 Filed under: predictions | Tags: cloud, ios, iphone, ipod, iTunes, nano, siri

While my posts haven’t been coming fast and furious lately, I’ve been watching the tech landscape and have seen some interesting shifts in where I believe a lot of things are heading.

Whither the iPod Nano?

This has been a perennial issue for me. When the iPhone 4S (aka the iPhone 5) was released, people did two things:

1. Thought that it was an inferior phone because the character “5” was not in the title

2. Forgot about everything else for a little while.

I, however, did not forget about the iPod nano. On the contrary, I began to think more about it, mostly from the perspective of “How can Apple make use of this new Bluetooth 4.0 thing?” While Bluetooth may not be very important to many people in the world, or may be synonymous with “headset”, Bluetooth information exchange technology makes possible a great many things that people basically don’t take advantage of. Case in point: a friend of mine just saw me typing this blog post on a wireless Bluetooth keyboard and said “Wow, a wireless keyboard? I didn’t even know they made those.” Naturally, he’s a little behind the times (friar, vow of poverty), but that doesn’t stop the concept from being foreign to many people. An iPad-toting client of mine didn’t know that Bluetooth could be used to connect an iPad to a wireless keyboard, either (see “headset” equivocation above).

At any rate, that’s where we’re at: Bluetooth has effectively been relegated to another name for “headset”.

The iPod Nano has the opportunity to become something so far beyond what it is right now. It can be a gateway to the information stored on an iPhone, a supplement to an iPad (remote control, keyfob, microphone, etc.), and, possibly even more importantly, a front-end for Siri. Naturally, the iPod Nano’s screen isn’t designed for displaying large amounts of information, but that doesn’t preclude it from being an information portal.

Smaller is…Smaller

When talk of an “iPad Mini” started swirling about, I immediately started thinking about the whole Steve Jobs “people don’t like these ‘tweener’ sizes for tablets” statement. Whenever he said something like that, you knew that a product wasn’t too far away. The issue for Apple wasn’t creating a product in that size, but rather timing their entry into that size category. One of the things that I’ve noticed about a great deal of the other 7″ (ish) tablets on the market is that they lack anything truly compelling for me. I wouldn’t want a Kindle/Kindle Fire because its primary purpose is to read books purchased through Amazon.

The Nexus 7 was almost enough to get me on board until I used one. “Why would I spend any money on this?” I found myself asking over and over. The only truly compelling thing that I saw in the Nexus 7 was the NFC capability, but even that was a stretch. I need a product like that to be an iPad, but smaller, capable of all the things my iPad is capable of. I’m sure there are many people in the same boat.

I’ve been using the iPad to take notes, draw, read, and write since its introduction to the market. People tried to tell me that it wouldn’t be capable of much, and I would just quietly continue working, nodding as I continued to accomplish goals I set out for myself from the comfort of a tablet that I could use comfortably all day.

I knew there was one problem, though: it was too big (and not by much) for me to carry in my hoodie pocket. There were times that I only wanted to carry my tablet with me and nothing else, lack of charging equipment and extra tubes for my bike being reasonable things to forego in favor of a tablet that could slip easily into my back pocket. My iPad was literally a half inch too big, and I resigned myself to carrying the things I needed in addition to my wundertablet.

It was a hard life, I know, but I made it through. Thanks for your concern.

Now, however, I feel like Apple is going to make a lot of people happy by creating a device that is perfectly capable of an absolutely ludicrous number of things (vis a vis other tablets), yet still has an extremely portable form factor (as though the iPad wasn’t portable enough).

Here’s the thing, though: Apple needed to time this whole thing. Releasing a 7″ (ish) tablet shortly after the iPad would have been great, and people would have really liked it, sure, but it wouldn’t have had the same impact that I believe it will have now. By releasing an “iPad Mini” now, Apple has allowed all the trash to sift itself out. Plenty of other companies have brought “me too” devices to market, and each has captured some small part of the iPad experience that people love, but left even more behind. Other companies thought that, if they could only get that 7″ tablet to market first, they would rule that space. The issue with that type of thinking is that it leads to sloppiness. Should this “iPad Mini” be released soon, it will be released with the entire weight of Apple behind it. It will have access to the iTunes Store, and it will have access to the App Store. All the apps that people have already purchased will be available on their device from day one. Their contacts and calendars will be synced through iCloud, and, while the same can be said for any Android tablet in that form factor, a person toting both Android and Apple devices would have to manage two devices with two different stores to shop from, two places to store their media, and no convenient way to slosh purchases around between devices.

With a device having a smaller screen size and profile, Apple will be making their signature store/device integration available in an even more portable form factor. The market will respond, and it will respond favorably.

Keep Your Friends Close

The last thing that I haven’t been hearing much about recently is NFC. Samsung released the Galaxy S III to a mediocre amount of fanfare, touting all of this NFC magic…but I have yet to see anything really interesting come out of it. I love the idea of NFC, but, as with the Nexus 7, I see no one using it. I don’t see any stores with NFC tags on their doors, or any restaurants with NFC tags on the tables to allow patrons to silence their phones and join the Wi-Fi with a single tap. None of this is real, and I have a sneaking suspicion that it’s because Samsung has no idea what it’s doing. They put products on the market that have checkboxes in all the right places, but no real-world application of any of the things that those boxes relate to. Great job, Sammie, your phone has NFC! Does that honestly play a role in most people’s buying decisions? No, no it doesn’t. A friend of mine recently purchased a new GSIII and, when asked about the NFC feature, had no idea what I was talking about.

Truth be told, I’m not sure NFC will ever be a truly compelling technology, but I believe that, if it is, Apple will do it right. They’ll do it right because they’re really the only company that can make something as obscure as NFC relevant enough to matter to the world. When the world’s most valuable company throws its weight behind something, you’re pretty safe betting that people are going to pay attention.

Now What?

All of this assumes a few things:

1. Apple is releasing a new iPod Nano.

2. Apple is releasing an “iPad Mini”.

3. The aforementioned products, in addition to the new iPhone, will contain NFC technology.

That’s a great many assumptions, but they all seem to make sense. I’m not one to start making assumptions and thinking that I’ve got it all right, but, based on what I’ve been seeing and, perhaps even more importantly, what I haven’t been seeing, I believe that all of these things are very close to reality.

I haven’t even touched on the possible integration with a refresh of the Apple TV, but I think that all those things are around the corner, as well.

It’s gonna be a helluva September.

The Apple Nexus

Posted: January 2, 2012 Filed under: innovations, life integration, predictions | Tags: apple, connection, ios, ipad, iphone, NFC, patent, tv

I’ve been reading a great deal in the past few months about all of the new Nexus phones that have been coming out recently: reviews by people who have used iPhones and tried to switch but failed, reviews by people who are avid Android users who love them, and most people who are somewhere in between. I’ve heard arguments as to why certain operating systems have more of a future and why certain phones are objectively better, and I really just stand somewhere in the middle, looking at all of this with a bit of a quizzical look on my face. I’m not trying to take sides here, but I believe that Apple’s position in this market is much better because of one main reason: NFC.

While it’s true that Google’s Nexus phones have had NFC built-in for some time, it has been clear that the feature has been little more than a bullet point in a presentation in order to build some buzz and give Android pundits something to hold over Apple’s head. I thought the inclusion of NFC in the first round of Nexus phones was half-baked, mostly because I looked around at the places I visited every single day and saw literally nothing that used NFC in a way that was available for public interaction. The only usage for NFC that I’ve seen implemented anywhere was in the TouchPad. We all know how that went.

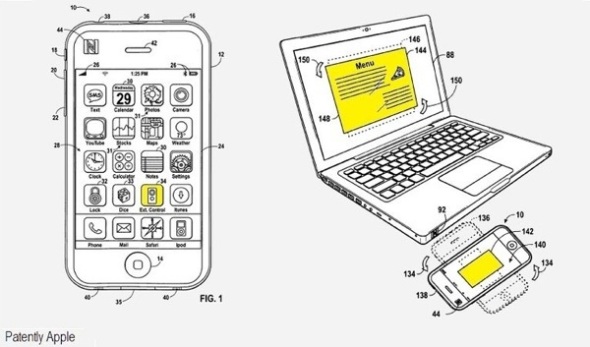

The key here is this.

If users wave a NFC-equipped iPhone at a NFC Mac (they need to be in close proximity to interact), the Mac will load all their applications, settings and data. It will be as though they are sitting at their own machine at home or work. When the user leaves, and the NFC-equipped iPhone is out of range, the host machine returns to its previous state.

This is huge, and with Bluetooth coming back in a big (or perhaps little, as in low-power) way, this may be even more effective.

“The usual idea is that you would use NFC to set up the link between the two devices and then do an automatic hand over to a different protocol for doing the actual transfer of data – eg Bluetooth, Wi-Fi, TransferJet etc – and that’s what I imagine would be happening here,” she said.

The above comes from analyst Sarah Clark of SJB Research.

This idea still has so much potential. As Steve Jobs said when he unveiled iCloud, Apple is demoting the computer to just another device, one that accesses your data in its servers in North Carolina somewhere. With the computer being just a gateway to your computing state anywhere, any device can also theoretically access this saved state and allow the user to resume their previous session wherever they are.

Let’s also look at another piece to the puzzle: Apple TV. We don’t know what Apple is planning for this theoretical Apple TV later this year, but let’s take a look at the Apple TV in its current incarnation, the tiny little black box that, quite frankly, is a little Wünderdevice.

For starters, you can now do this. I think that’s a pretty big deal. So the Apple TV, in its current state, can run iOS apps. It can access iCloud. It can play music and movies, and also allows a compatible device to mirror its display through a Wi-Fi connection. Let’s talk about that for a moment, as well.

If you haven’t already, check out Gameloft’s Modern Combat 3. It’s basically a Modern Warfare clone, but it has one killer feature: the ability to mirror the game on an Apple TV, which turns the iOS device you’re holding into a controller and puts the game on the big screen. I tried this on my iPad and was amazed with the results. This is truly something that game developers need to be looking at, but it’s something that regular developers need to be looking at, as well. Think about it – if a device that is mirroring its display output to an Apple TV can display different content on the device than on the TV, a word processing app could essentially turn the tablet into a wireless keyboard, while the main workspace is displayed on the TV. The iPad or iPhone (or both!) could display a suite of controls or “function” keys, or function as pointing devices, or really anything that you can think of. The idea of a “technology appliance” holds even more water here, since these devices can be used synergistically to create an effect that one device on its own is technically capable of, but that is better when spread out among several devices. Look at Keynote, for instance. With an iPad and iPhone, a person can run an entire professional presentation with no bulky equipment and a minimum of technical prowess.

In the context of the aforementioned connection to an Apple TV, this capability becomes even more important, since it allows the TV to function like a traditional “desktop”, but without the bulk of wiring, an extra device to draw power, and connections to set up. NFC handles everything, and the bulk of the transfer can then take place over Wi-Fi, Bluetooth, or some other protocol that is standard in Apple devices.

And this, my friends, is why Apple is positioned so much more powerfully in this market than any Android device manufacturer. While other manufacturers will essentially be playing catch up with all of this anyway, they will also have to contend with consumers who will be presented with each manufacturer’s take on this idea. Where Samsung may offer one type of connectivity, Asus may not, since it doesn’t have a TV of its own, but LG might. The consumer will stand in front of his TV and scratch his head wondering why his Motorola Xoom isn’t connecting to his Samsung TV, while his neighbor with an iPad and Apple TV is able to transition from room to room in his house without missing a beat.

The aftermath of this whole shebang would be the equivalent of a Destruction Derby, with all of these companies vying for the consumer dollar, blowing themselves to bits and waging a war of attrition while Apple’s devices still lead the way due to their simplicity and interoperability. The next thing that will happen is that these other manufacturers will start listing even more specs on their TVs, things like gigs of RAM, processor speeds, and core counts. The consumer will look at all this and once again scratch his or her head in confusion. The Apple TV will say something like “Best-in-Class Picture Quality, Siri, and [catchy Apple-fied name for NFC connections]. Say Hello to Apple TV.”

It’ll sell like gangbusters, and we’re all going to want one. Of course we will, it’s going to represent the future of computing. Can we even call it that anymore? No, not really, it doesn’t feel right, and in this one (admittedly long-shot) future, “computing” isn’t a thing. You just pick what you want or need to do, and you use well-designed, simple hardware to do it.

The Revolution Will Be…Worn?

Posted: December 29, 2011 Filed under: ideas, innovations, life integration, predictions | Tags: 4.0, bluetooth, ios, ipad, iphone, low-power, PAN, siri, ubiquitous

Looking at the state of mobile technology today, it’s clear that the tablet form factor is the flavor of the week. A decade ago, however, the future of mobility looked a lot less like a clipboard and a lot more like a wristwatch.

For years, people were focused on wearing their computers. What is a thin, rectangular window to endless content now was a wrist-mounted portal to information then. The problem that designers always ended up getting stuck on, however, was the interface.

Designers tried to tackle this in a wearable computer concept, but the end result is still a mashup of the ideas of the last few decades and the fancy swirly graphics of today. The input method in the aforementioned concept (a swing-out keyboard? really?) is kludgy, at best, and the whole thing looks, well…huge. Would anyone actually wear that? No, no they wouldn’t because that sort of thing is a fashion nightmare.

Then there’s this one. Ouch. Really? I mean, sure this is military technology, so we’re not looking for haute couture here, but…I mean…really? This just won’t do.

The problem is that the input method for all of these concepts still involves directly interacting with the device, touching buttons, or tapping the screen with a tiny stylus. All of these options are unacceptable when it comes to wearable computing. A person cannot have devices oozing out of every pore and orifice just to get at a Wikipedia article. What they need is a device that is intuitive and simple, something that “just works”.

This is where it gets difficult.

Apple has already developed a powerful, revolutionary computing interface powered by speech. They call it Siri, and I’m sure that most people are familiar with it at this point. If not, the link should tell you everything you need to know. The bottom line is that it’s intuitive, and allows a person to perform almost every single task they usually need a computer to do with little more than a functional set of vocal cords. This powerful computing interface, however, requires a persistent connection to the internet to be able to send your voice to Siri, and to receive Siri’s reply. Furthermore, access to Siri’s beautiful mind is limited solely to owners of Apple’s iPhone 4S, at the moment.

Here’s where it gets interesting.

Apple designs hardware. They also design software and build empires on their intuitive, simple interfaces. Siri is about as simple as you can get, but not everyone has the ability to talk to Siri, and there may be those who simply don’t want to purchase a new phone for the privilege. What if, however, access to Siri could be granted by wearing a watch? Apple’s design team could surely design a beautiful watch. What if this watch was actually a computer, however? Or, perhaps not a computer, but rather a gateway to this magical, intuitive, almost infinitely powerful computer? Follow me, child, the path to this potential future is an interesting one.

Apple has been doing a lot of work behind the scenes, as it usually does. It’s been chugging away at the internal components of the iPhone 4S, upgrading a little-loved part of the phone that may actually end up being the key to this whole new ecosystem that Apple has developed: Bluetooth 4.0. The main thing about the new Bluetooth 4.0 specification is that it allows for a very low-power state, which keeps certain communication avenues open while allowing others to close. This versatility means that a wrist-mounted “computer” doesn’t actually need to do any processing of its own, but requires a connection to a device that can. Furthermore, while previous iPhone models may not sport the swanky new Bluetooth 4.0-compatible chips, they can still perform admirably with normal Bluetooth connections. This opens up the possibility for previous iPhone models to access Siri through a special piece of hardware that piggybacks off of the existing iPhone data connection through Bluetooth in much the same manner a headset would.

The end result is that a person will be able to talk to Siri, but do so without any sort of visual feedback. Ultimately, this is the sort of interaction that Apple is going for anyway. The device doesn’t need a screen (but may have one like the iPod Nano) because the interface is completely invisible. Much like the iPod Shuffle’s tiny form factor that can still communicate with the user, the new “wearable computer” does not have to be anything more than a gateway. The magic of the iPod Shuffle is that it feels like it’s so much bigger. The power of the new wearable computer is not that it is super fast and spec’ed to the gills. The power is that it feels like the world is no more than a question away.

Dick Tracy would be jealous.

Fun With Numbers

Posted: October 5, 2011 Filed under: news, thoughts | Tags: 4S, 5, A5, announcement, camera, cpu, dual-core, future, ios, iphone, plans

Apple announced the iPhone 5 4S yesterday, much to the chagrin of the internet. Well…perhaps not to the chagrin of the internet, but everyone was expecting something called the “iPhone 5”, and Apple announced an absolutely amazing piece of kit they’re calling the “iPhone 4S”.

There was a lot of backlash, from what I understand, which seems…silly? I think that’s probably the best word to use right now. Silly.

See, the iPhone 5 was supposed to have all these amazing features, like a dual-core A5 processor, a higher-resolution camera, and image stabilization when shooting video. It was supposed to do all these amazing things with even better battery life, too. What a product! Yet, what we got was…the…wait let me check on this…we got the iPhone 4S…thing…with a dual-core A5 processor, higher-resolution camera, image stabilization, and something called “Siri”. Ok? But this silly piece of hardware is…well just look at it! It looks the same as the iPhone 4! And it’s called the iPhone “4S”. PEOPLE can you hear what I’m saying? It has a four in the name. Four is not five, my dearies. This is clearly a disappointment.

Let’s talk about what’s NOT in the iPhone 4S:

I think that about covers it.

Seriously, though, the next iPhone is revolutionary. Not because it looks like an iPod touch, but because it’s basically an iPad 2 in the palm of your hand.

I don’t think it’s time for a chassis redesign, and I’m glad they stuck with the iPhone 4’s slick glass and steel thing. There’s so much more in there, and all it will take for people to understand the beauty of the iPhone’s new guts is moseying down to their local crystal palace (aka Apple Store) and fiddling with the thing for five minutes, in which time they’ll realize that they can be twice as productive with this new pocket computer than they are with their current one. Game, set, and match.

This Isn’t a Thing

Posted: September 18, 2011 Filed under: rants | Tags: Android, facebook, google, iphone

This won’t work. I’m not saying that it never will, but I don’t believe that this is something that Apple’s framework actually even allows; Apple doesn’t allow this by design. The whole idea of a phone that does the “bidding” of another company, or simply becomes a platform for another company’s ideas, values, and way of thinking is absurd. Google might allow it because they’d find a way to monetize it, but can you imagine that? I mean actually take a minute to imagine a Google Ads-ridden Facebook interface shoehorned onto an Android phone running some forked version of the OS. Jesus, it hurts to even think about. What a horrible, mind-destroying user experience that would be.

Treading Lightly

Posted: August 16, 2011 Filed under: innovations, life integration, review | Tags: bags, bungee, cellular, chrome, Europe, inka, ios, ipad, iPad 2, iphone, keffiyeh, parker, pen, shemagh, shoes, travel, velcro, vibram, waterproof, wifi, zebra

The Travel Bug

Travel, whether it be to a neighboring state or outside our country’s borders, is an experience that many people crave. The problem is that travel is very stressful to many people, a feeling that is exacerbated by the sudden disruption in daily life that occurs when a person is “out of their element”. Little things, like the lack of cell phone service and non-ubiquitous internet, can compound the feelings of isolation that people sometimes experience. The “struggle” of travel, that is, the difficulty of moving from place to place with ease, can be compounded when baggage feels too heavy or cumbersome, or when the physical well-being of one’s belongings becomes an issue.

There’s no easy way to squelch these feelings, but there are ways to diminish them so that they become more or less irrelevant. I had the good fortune to be away from my home for over a month recently, and was able to put my mobile lifestyle to the test of international travel. I’d like to share all of my successes and failures with you so that you can be better prepared for any potential trips you may have coming up.

Getting there

One of the hardest things that any traveler will have to deal with is transportation. The stress associated with traveling—the documents, times, schedules, and logistics involved with shuffling belongings around—can be overwhelming at times. The simple solution, for me, was simply to know myself really well, and know how I handle things like documents, money, and tickets.

Wallets

Big Skinny

For those of you who are used to carrying around a fat wallet, this section will probably be pretty easy for you, since you’re used to having something juicy in your pocket. I’m used to a wallet made by “The Big Skinny”, which is super-slim and almost nonexistent. For day-to-day use, it’s ideal, but that’s in the good ol’ U.S. of A. For international travel, it just didn’t quite fit the bill…or the bills didn’t quite fit. It’s a small thing, but other world currencies tend to be more square than our long rectangles. Despite the fact that my wallet really wasn’t designed for international travel, Big Skinny makes wallets that are, and I would highly recommend them due to their light weight and ruggedness. For extra protection against electronic/RFID theft, put a single sheet of aluminum foil in the outermost pocket—it’ll protect your passport and any RFID-enabled credit or debit cards.

Some folks like to travel with those under-shirt money/passport holders, but I hate those. Nothing irks me more than something pressing up against my skin while I walk through some crowded marketplace or beautiful scenery.

Why Is This Technology?

I listed these wallets here because I believe they’re among the best wallets produced today. They’re simple, lightweight, and can store as much as a traditional leather wallet at a fraction of the weight and size. They’re washable, durable, and easily carried, which means that they’re an improvement on the wallet as most people know it. Big Skinny took the design of a wallet and updated it to accommodate a more mobile lifestyle.

Bags

Chrome Berlin

Recently, I’ve taken up biking, and have also taken a liking to stuff made by Chrome. If you’re a biker, then you know what I’m talking about. Their bags are made to withstand direct hits from nuclear weapons, and come with a lifetime warranty. They’re expensive, I’ll give you that, but they’re amazing.

Some people will stop reading at this point and say, “Traveling abroad is completely different than biking through an urban setting,” and I will agree with them wholeheartedly. If you’re like me, however, then you want options for your travel, and the Berlin has just that. This thing has more space than you’ll need, guaranteed, and is still considered carry-on for flights. It also has the benefit of being tough as nails, waterproof, and padded.

Now, this is no backpack, it’s a messenger bag, and I’m recommending this over my usual go-to hiking backpack because of its versatility. If you’re checking into a hotel or hostel, you can pull some of the unnecessary stuff out of it to leave behind in the room and carry just what you need for the day—the bag has straps to pull it into a more compact profile. If you decide to go shopping one day and need to bring home all your swag, just let the straps out and you’ve got a house on your back. Done.

Incidentally, I was actually traveling through Berlin with this bag with a group of around 30 high school students, who decided that it would be a good idea to go to a club one night. I had space to hold 27 of their jackets, raincoats, and the like while they danced, in addition to some of the standard stuff I keep in there (iPad, charging cables, journal, pencil case with band-aids and medical tape, hat, bandanas, bungee cords, Leatherman Multi-tool, blanket). So…a lot of space. These bags are also damn near impossible to steal from, since they’ve got so many straps, velcro patches, and buckles that someone attempting to open yours while it’s on you will most certainly get your attention before they can grab anything.

Why Is This Technology?

I list this here because this bag was designed, tested, and produced by a company that knows mobility. They take pride in their materials, their craftsmanship, and the efficacy of their products. The bag is waterproof, incorporates a load-distribution system (by way of extra straps that can be tucked away when not in use), and has “hidden” compartments for things like blankets and hoodies that you may not need all the time, but are good to have around. This isn’t just any messenger bag—it’s the evolution of the messenger bag as interpreted by people who need to get around quickly and efficiently.

Swissgear Sling Bag

If you’re one of those people who would rather not have a large bag, swing by Target and pick up a Swiss Gear Sling Bag. If it’s out of stock, grab something similar, since they can pack flat and are lightweight. These can be great as a simple day pack, since they’re maneuverable and don’t get in the way of enjoying the moment.

Shoes

Vibram Fivefingers TREKSPORT

There are lots of opinions on shoes, so I’m just gonna throw mine into the ring. I haven’t discussed these shoes before because I was holding off on picking up a pair for a while. Spending a month walking around in them, however, has totally changed my mind. Trucking through Berlin, Disney World, and now Chicago was an eye-opening experience. I don’t think my other shoes will be seeing a whole lot of use now that I’ve got these bad boys.

The shoes in question are the Vibram Fivefingers TREKSPORT, and they’re amazing. I picked them up on clearance from REI, so they were cheap for me, around $80. I’d recommend looking around a little to find a pair discounted from regular retail, since these may not become your everyday shoes. Vibram has introduced several new models of the Fivefingers shoes, so look around to see what fits you best.

Berlin was a relatively damp experience for us. By “relatively damp”, I mean the whole lot of us were completely soaked for four straight days on account of rain. These shoes aren’t waterproof, so my feet were absolutely sopping wet for almost four days straight. Everyone else’s feet were, too, but since there’s just a scant few millimeters between your foot and the pavement, stepping in a puddle is one of those “instant feedback” situations, in that you’ll know right away. You won’t have to worry about cutting your feet on glass or rocks since the sole is quite tough, but you will have to worry about sloshing through street water. If your travels are going to take you through destinations with lots of water-borne diseases, you’ll have to look elsewhere for footwear. If you’re going to be moving through cities and uneven terrain, however, these shoes can handle everything.

A further plus is the fact that you can just toss these in the washing machine when you’re all done (cold water only). Just let them air dry afterwards and you’re back in business. Just remember to take a brush to the soles beforehand so you remove any clinging gunk and organic matter from the shoes so you don’t have really gross stuff floating around in your washing machine water with the rest of your clothes.

A potential downside is that these shoes attract attention. Everywhere I went with them, I’d hear people talking about them. Potential thieves will, of course, see them as well, and if you’re in a country where these shoes aren’t sold, or where the shoes are a relatively new item, you’ll be pegged as a traveling American from a mile away, so be careful.

Why Is This Technology?

I listed these shoes here because they’re designed by a company that knows shoe soles and is looking to move the idea of a shoe into the 21st century. They’re lightweight, durable, and healthier for your feet than normal shoes. One look at these shoes communicates forward-thinking design and a novel approach to bone and joint health.

Having Fun, Staying In Touch

Once you’ve actually gotten to where you need to go, it’s time to set up shop, so to speak. There are lots of ways to go about doing this, but I’ve found that there are some well-known gadgets that make this process a whole lot easier.

Gadgets

Here’s my forte. I’m gonna rip right through some of the tech I brought with me to illustrate how it was useful or not.

iPhone

As I thought before I left, I really didn’t like using the iPhone overseas, mostly because the one I was using was locked to AT&T and not useful abroad. Even if I wanted to, I couldn’t just pop in a foreign SIM card to make calls without first unlocking the device. Despite great advances in the simplicity and efficacy of that process, unlocking is still potentially dangerous, and most people won’t want to touch it. I didn’t, and I found my iPhone fairly useless. Sure, I could still use it like an iPod Touch when in range of a Wifi signal, but that didn’t cut it most days. I’m sure my experience would have been different if my iPhone was carrier unlocked, but purchasing an unlocked iPhone is prohibitively expensive right now. If the Apple rumors pan out, maybe Apple will introduce a carrier-agnostic handset that people can take with them anywhere in the world. Fingers crossed, right?

The one place where the iPhone performed famously was in photography. I’m no professional, but some of the pictures I was able to capture simply because I had my iPhone handy are nothing short of spectacular, and the iPhone’s ability to take panoramic shots with the help of apps like Microsoft’s Photosynth gives it a huge leg up over traditional point-and-shoot cameras. Other apps that produce different effects, like SlowShutter, help capture more drama. Hipstamatic is really a no-brainer, but spend some time exploring the app and learning to use the various lens-film combinations, since that will really help you snap the right photo with the right mood when you need it. Hipstamatic is incredibly versatile, so don’t discount it as a fad or toy. I’ve seen and taken some beautiful shots with it.

iPad/iPad 2

An amazing piece of technology that has changed the face of the mobile landscape completely, and also incredibly versatile while traveling.

The camera on the iPad 2 isn’t the best, but it is great for capturing video, so take advantage of that. Be aware, though, that pulling either the iPad or iPad 2 out in public makes you a target. It’s not something that you can just slip into your pocket like a regular camera or iPhone/iPod Touch, and if you use the Berlin bag that I suggested above, you can’t exactly get it into or out of the bag rapidly, either. Exercise caution if you’re going to be shooting video with it in a crowded environment. If you want to shoot video, scope out places to shoot from that aren’t too exposed, or that have limited access points. Getting used to doing this is a valuable travel tip in general, so make it part of your day-to-day preparation.

The main reason I suggest the iPad is due to its ability to fluidly transition from country to country. Since the iPad is not SIM-locked, you can purchase a data-only SIM card online or through a local retailer to use during your travels. This makes the task of communication much, much easier, and if you use a service like Google Voice, you’ll be able to communicate with anyone in the world the same as if you had a local phone. Skype is also a possible alternative, but I find GV to be a little more versatile and easier to use. Skype, however, has the ability to send SMS messages to more countries than GV, so consider that when planning which service to load up on.

Without an internet plan, however, the iPad is still more than capable. Any Wifi Hotspot can become a gateway to the world, and with the iPad’s ability to store hundreds of songs, books, magazine articles, and the like (not to mention a handful of your favorite movies), it’s easy to see why this little device is the ideal traveling companion. When I needed information about where to go, I checked with several of the guides I had downloaded, and language was never really a problem with dictionaries pre-loaded. The Google Translate app is really great, but requires that you have an active internet connection to function, so I recommend it only if you already have some sort of cellular data connection (or easy and ubiquitous access to Wifi).

While Android-powered tablets and phones will have some of these capabilities as well, they’re not as well-integrated, in my experience. Using an Android-powered tablet without internet, for example, is incredibly difficult, and many of the apps that I was able to enjoy on my iPad (The New Yorker, National Geographic, and Wired, just to name a few) really don’t exist on Android. Purchasing and reading books from the iBooks Store is incredibly easy, and having hundreds of books in my library makes long train rides bearable.

Writing on the iPad is also a great experience, but those who need an external keyboard for longer typing sessions should probably explore their options. I found the Apple Wireless Keyboard, coupled with the Origami Workstation to be a great solution, but can gladly go without either if necessary. If you have an iPad 2, then a Smart Cover is absolutely necessary. Not only is it great for protection, but propping up the iPad for typing is a great feature. I also have a clear screen protector just to make sure the screen stays safe.

To protect the whole shebang, I have my iPad in a Targus Crave Slipcase that I also picked up at a steal for under $20. This isn’t necessary, but it adds to my peace of mind when packing my bag, since I know nothing’s going to crush my iPad.

Jawbone JAMBOX

This one was a bit unexpected, but has proven to be a total life-changer.

The JAMBOX is a portable speaker. That’s it. It’s a really good portable speaker, though, and it’s tiny, and wireless, and for those nights that have you exhausted and sitting in your room (soaked to the bone, in my case), it makes a huge difference when you can fire up some tunes that take you back home, or remind you of all the things that make life wonderful. It has an amazing battery life, and the sound quality, in my opinion, is phenomenal. It’s not a set of home theater speakers, and it’s not going to fill the streets with your beats when you need to do a quick breakdancing session to make some money, but it’ll fill the room with enough sound to get you there.

Optoma PK102/201/301

Another unexpected gem that you can pick up relatively cheaply now, depending on the model you’re interested in. I snagged the PK201 (an upgrade to the PK102) for my travels, but you may want the PK301 for the extra brightness. The PK201 and PK301 are capable of displaying the same resolution, however (720p).

I doubt you’re going to need this one on your travels, but if you’re moving from country to country with friends, sometimes it’s nice to have the ability to have an impromptu movie-watching session. The PK201 plays nicely with the iPad 2’s new HD video mirroring, and can also display HD video at really huge screen sizes. You will need a relatively dark room, however, since the PK201 isn’t exactly a spotlight. For travel, though, the PK201 does fantastically.

I used this projector primarily to display supplemental material on the wall during a three-day retreat at an old German castle. There is a little bit of “wow” factor in this one, but not so much that it becomes distracting. If you’re the kind of person who likes a little bigger image to work off of, or if you need to get your content displayed for lots of people to see it, take a look at this one (or its big brother, the PK301). Also, coupled with the JAMBOX and an iOS device, the PK201 creates a nice little portable theater with decent sound and fairly good picture. It’s the little things, right?

ZaggSPARQ

Naturally, having the right adaptors for the job is of huge importance, and knowing the relationship between voltage and amperage can save you a costly trip to an electronics store.

One of the benefits of traveling around with an iOS device is knowing that the chargers work on 100-120 V current as well as the 200-240 V current present in many other countries. As long as you have the proper adaptor for the outlet, you won’t need to lug around an expensive transformer. Finding a free outlet, however, is another story, one that is easily solved by a device that has multiple USB slots, or one that splits the outlet. I opted for the former by using the ZaggSPARQ.

I think the ZaggSPARQ is the unsung hero of a mobile lifestyle, even more so on long overseas trips. The ZaggSPARQ, in addition to being a charger for multiple USB-powered devices, is also a portable power source all its own. It has a built-in 6,000 mAh lithium-ion battery, which means that it can recharge your iPhone almost four times (real-world usage is probably 2-3 times), or get your iPad up to around 60% from 0% (real-world usage is closer to 55%). For anyone who’s ever been stuck without power for a while, this is HUGE. Thankfully, I was never in that situation, but the ZaggSPARQ is a powerful (haha…ha…) ally when it comes to being mobile in a foreign country. You won’t always have access to outlets, or may have to share them with people when you do, so having a way to keep your devices juiced up is pretty clutch. The downside is that, especially with all of the gadgets I’ve mentioned, this one brick won’t have enough power. You may want to get two, or try to find a power supply with a larger capacity (10,000 mAh or higher, if possible).
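If you want to run the numbers for your own gadgets, here is a rough back-of-the-envelope sketch in Python. Only the 6,000 mAh pack capacity comes from the paragraph above. The 1,500 mAh phone battery, the 0.65 efficiency factor, and the 9,000 mAh daily demand are placeholder assumptions of mine, not published specs, and the function names are made up for illustration. Comparing mAh figures directly also glosses over voltage differences between batteries, so treat the results as ballpark figures at best.

```python
import math


def full_charges(pack_mah, device_mah, efficiency=1.0):
    """Estimate how many full device charges a battery pack can deliver.

    efficiency is the assumed fraction of the pack's rated capacity that
    actually reaches the device (1.0 = the ideal, on-paper figure).
    """
    if pack_mah <= 0 or device_mah <= 0:
        raise ValueError("capacities must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return pack_mah * efficiency / device_mah


def packs_needed(demand_mah, pack_mah, efficiency=1.0):
    """Estimate how many packs cover a given demand between outlets."""
    if demand_mah < 0:
        raise ValueError("demand must not be negative")
    usable_mah = pack_mah * efficiency
    if usable_mah <= 0:
        raise ValueError("usable capacity must be positive")
    return math.ceil(demand_mah / usable_mah)


PACK_MAH = 6000           # from the article
PHONE_MAH = 1500          # assumption: placeholder phone battery size
REAL_WORLD = 0.65         # assumption: losses from conversion and heat
DAILY_DEMAND_MAH = 9000   # assumption: everything you charge in a day

ideal = full_charges(PACK_MAH, PHONE_MAH)                 # 4.0 on paper
realistic = full_charges(PACK_MAH, PHONE_MAH, REAL_WORLD)
bricks = packs_needed(DAILY_DEMAND_MAH, PACK_MAH, REAL_WORLD)

print(f"On paper: {ideal:.1f} charges, realistically: {realistic:.1f}")
print(f"Packs needed for a heavy day: {bricks}")
```

Swap in the capacities printed on your own devices and battery pack. If the realistic number comes out lower than what you burn through between outlets, that is your cue to pack a second brick or a bigger one.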

All the Rest

This section is for the little things that don’t really fit anywhere else. Some of these things will seem like common sense, some will be familiar to frequent travelers, and some may simply be a new take on an old standby.

Multi-tool

The TSA bans flying with most multi-tools, mostly because they contain some sort of knife or cutting implement. There are, however, multi-tools that the TSA does allow, but the TSA folks you encounter will most likely be ignorant of their own rules and ask you to discard it. That being said, if you’re checking luggage, just put it in your checked bag. If you’re not checking anything, but are traveling with someone who is, ask if you can stash it in their luggage for the flight. If you’re traveling alone, try purchasing a tool under seven inches and without any sort of cutting implements or awl/icepick/punch tools. If you can’t get a multi-tool without these things, consider sacrificing a tool and just filing off the offending portions.

Just remember that even if you take all these steps, the TSA folks may still stop you and toss your stuff.

Why Is This Technology?

Tools have evolved over thousands of years into the forms that we know and recognize today, but are still made of materials that are heavy and difficult to transport. By re-imagining common tools, designing and engineering them to fit into more compact forms, and producing them with more advanced metals, people have taken age-old ideas and made them more portable without sacrificing strength or durability. Having a simple set of tools available in a compact form means that a traveler can be more mobile, confident, and capable than before. This adds to safety and security, since travelers can go places that may have been previously inaccessible.

Flashlight

Get a cheap LED-powered flashlight from Walmart or Target and bring a couple spare batteries. Or, pick up a USB-rechargeable LED bike light (red or white). I opted for the latter, since I already had a bike light. It fits the bill perfectly for when you need a little extra light, and the rechargeable nature means that you don’t have to worry about losing batteries. If you take my advice regarding the ZaggSPARQ, you probably won’t have to worry about running out of power, either.

Why Is This Technology?

Advances in battery and illumination technology have made it possible to carry around a light source hundreds of times more reliable, efficient, and powerful than flashlights of the past. Keeping a light source on hand at all times means that you don’t have to be afraid of the dark, and the light can even be used as a tactical tool to momentarily blind or distract an aggressor.

Velcro Straps/Bungee Cords

A few velcro straps can help keep your stuff together, and pack so tiny that they’re essentially nonexistent. Plus, you can usually daisy chain them together since they’re velcro. Grab a few and throw them in your pack for those strange times when you need to find a way to strap three umbrellas together to shelter your group’s bags while it’s pouring outside and the line to get into the bathroom is too long.

Bungee cords fill the same purpose. My favorites are the kind used for tents, since they’re simple and have no hooks to catch on things that don’t need to be caught. Again, grab a couple, and daisy chain if necessary.

Why Is This Technology?

Rope is heavy, cumbersome, and requires knowledge of knots to fit various situations. Velcro is easy to use and requires about one second to learn to use. People used to use rope because it was the only effective way to tie things together. With the development of elastic, even a short bungee cord could become much longer and more useful, so keeping these on hand will allow you to be more adaptable to whatever situations you may encounter.

Scarves/Bandanas

I was turned on to the idea of a Shemagh or Keffiyeh recently by a friend, and I’m now wondering why these wonderful things weren’t a part of my life before.

A shemagh or keffiyeh is incredibly simple (just a large piece of cloth) but incredibly versatile. In hot climates, it can be wet down to provide long-term cooling and heat dissipation, or wrapped around the head to provide shelter from the sun. It can be worn around the neck or head to provide relief from insects, and even fashioned to protect the eyes while still providing visibility in blowing sand. In cold weather, the obvious use is warmth, but the cloth can also shield the eyes from blowing snow the same way it does from blowing sand. Because of its large size, it can also be used to tie things together (if you run out of velcro or bungee cords, naturally).

Bandanas serve a similar purpose, but, due to their smaller size, are more limited in their application.

The one issue with the shemagh/keffiyeh is its cultural acceptance. While many people in the world view the shemagh/keffiyeh as an accepted part of everyday life, there are still many people who associate this simple piece of cloth with a specific ideology or mindset. If you feel that you may be treated differently or unfairly because you’re wearing one, take the obvious course of action, and simply take it off. You can also make your own out of simple, non-patterned fabric by cutting large squares (usually 40-45 inches per side) out of fabric you’ve purchased yourself.

Why Is This Technology?

The use of a simple staple of life to fit multiple situations is a technology in itself. Learning to use something to make life easier is what technology is all about.

Document Sleeves

I tend to have a difficult time keeping papers in readable condition, so I always carry a set of plastic document sleeves with me to protect any sort of paper documents I may have. These are simple, and can be purchased from any number of office supply stores. I favor the kind of sleeves that are open on two sides, which allow me to get paper in and out quickly without having to worry about closing mechanisms that can rip or tear, compromising the structure and usefulness of the sleeve.

Why Is This Technology?

Folders have been around for as long as I can remember, and probably a great deal longer than that. I hate folders, simply because they have to be opened in order to be useful, and tend to tear fairly easily when twisted or stressed. My plastic sleeves are simple, have withstood years of my abuse (although I try to keep them as safe as I can), and keep all of my important documents together so I can travel more effectively.

Pens

Everyone and their mother will have an opinion about pens, but my favorites are the Zebra Telescopic pens and Parker Jotter, mostly because they feel great and write well. The Jotter is made of stainless steel, and I’ve used it to punch holes in leather, fish out keys from drains, and also, believe it or not, write a little. I tend not to go for the super-special space pens and write-anywhere pens because I don’t ever find myself writing upside down or underwater. That being said, if having a pen that writes while wet is important to you, go with the Inka, since it’s almost indestructible, refillable, and made in the USA.

Why Is This Technology?

Writing is arguably one of the most important inventions of all time, but writing implements have largely stagnated over time since, well, there’s not much more we can do there. There are companies that are innovating constantly, however, like Livescribe. While pens like the Pulse or Echo are fantastic in classroom and meeting situations, most people don’t need them while traveling. The pens I’ve mentioned are more durable and more finely crafted than your run-of-the-mill ballpoint pen, and represent an intersection between research, quality materials, and good design.

The Best For Last

Sharpen your mind. It seems strange to bring this up at the end of a long article about stuff, but your mind will always be your best tool. When you travel, you will get tired, you will get hungry, and you will experience uncomfortable levels of heat, cold, and moisture (too little or too much). You will be surrounded by people you don’t know, who don’t know you, and who may even feel threatened by you. You may travel to countries that have a disdain for Americans, or see Americans as cash pots that they can take from.

All of these reasons compound the importance of having a sharp mind at all times. I’ve found that martial arts training, meditation, and yoga are all good ways to hone your awareness and center yourself in the moment. If you’re used to a life of comfort and trust, then find your nearest big city and spend time there as practice. People with questionable morals already take advantage of those they view as targets, and, when traveling, you’ll be identified as a target from halfway across the country. Drinking in excess, taking drugs, and making poor judgment calls all make you more of a target, so they are out of the question.

Also, understand that anything classified as “bad” at home will be classified as “absolutely horrible” when you’re in a foreign country. By that, I mean things like hospitals and jails. If you go out, do something illegal, and land yourself in jail, that’s it. The folks from the American Embassy might stop by to wave hello, but that’s about all they’ll be able to do. Traveling to a foreign country is the big leagues, folks, so remember that before you start buying cheap drugs or getting shlooshed at the nearest watering hole.

Why Is This Technology?

Martial arts, yoga, and meditation have been developed and practiced for thousands of years, but animal nature has not changed very much. The lessons you can learn through self-defense classes may not be able to win you a UFC tournament, but they’ll keep your mind focused on what’s important, while sifting out what’s not. Learning how to move comfortably, carry yourself well, and quickly ascertain people’s motives are very important tools that people have developed over centuries. Unfortunately, you can’t buy common sense or street smarts, but you can “update your firmware”, so to speak, by learning these skills.

Just like a firmware update, however, you can’t stop halfway through. Don’t think that a week or even a few months of training are enough to get you in the right mindset. Real training takes years. If you’re going to be a committed and regular traveler, consider joining a martial arts class before you start globetrotting.

Conclusion

As with any article you read regarding travel, it’s important to understand that not everything I’ve experienced will fit your situation. I use my resources in ways that other people wouldn’t consider, and other people would use the very same resources differently. There are also lots of things I left out here, things like clothing, hygiene, and laundry. I think there’s plenty out there that you can find on your own, though.

When choosing your gear, make sure that you’ve got what’s right for you. It’s easy to read just about any travel article and think, “I’m gonna do the same thing!” and then run out and buy a whole bunch of new stuff. I don’t recommend buying new stuff for travel, since you’ll probably use it for a short while and then consign it to the rubbish heap. I do, however, recommend getting new stuff if you know you’ll use it after you’re done traveling, since it will both have more character, and keep you in a lightweight, low-impact mindset.

Ultimately, you’ll have to keep in mind that things won’t work the same while traveling. Your typical routine will be disrupted (sometimes significantly), and you’ll just have to adapt to it. Things that were easy to do here may take many more steps while you’re in another country, and things that were difficult in your native country may suddenly seem easy for no apparent reason. Try to strip away all your creature comforts for a few days to see how you might function without easy access to power, internet, and transportation. If you can do well without these things, then slowly add your typical host of gadgets back into your daily life to find a balance of mobility and presence in the moment.

A Novel Approach

Posted: May 29, 2011 Filed under: explanations, life integration, news | Tags: apple, asus, atrix, foleo, ios, ipad, iphone, motorola, padphone, sync, syncing, tablet

So there have been a lot of approaches to this whole smartphone/tablet combo, and I struggle to see how any of them are truly good approaches to something that really isn’t a problem to begin with, and, truth be told, some of them seem actually harmful to the future of the PC that we’re currently headed toward.

The recent release of the Asus Padfone thing is just insane, like a tablet “turducken.”

For some reason, tablet manufacturers keep insisting that the tablet experience is hamstrung on its own, and continuously mandate the use of some sort of phone in order to complete the experience, or even use the device at all. Before anyone jumps on me for that sentence, I know that two of those examples aren’t even tablets, but take that in the spirit of the statement.

Companies designing these personal, productivity-driven devices that are reliant on smartphones are saying several things simultaneously. “You can do more!”, “You don’t have to manage the data on two devices separately!”, “You have more flexibility!” etc. What is really happening, however, is the cheapening of these devices and damage to the overall industry. Let’s take the Palm Foleo, the first of its kind and arguably the predecessor to the netbook. This device was “revealed” in an era when people got their data connections by tethering their devices to Bluetooth-capable phones, so it made sense for the Foleo to then suck data out of its tethered Treo. Kudos to Palm for attempting to create a great ecosystem, too. I applaud that. I think it was too revolutionary at the time, however, which led to its ultimate failure. (Side note: At the time, I was using a Nokia N800 paired with a Sony-Ericsson K790a (James Bond, FTW!). I loved both of these devices, but I kept thinking “I’d like to be able to use this tablet if I ever forget my phone,” and “I wish this phone was more capable at general ‘computing’ tasks so I can still use it if I ever forget my tablet.” Then I got an iPhone. At no point, however, did I think that the phone should be my gateway to the Internet for another device. It stood on its own and was perfectly functional.)

Currently, however, having this sort of dependence tells the consumer that

- Their device is not capable of real work (which is a lie).

- Their larger laptop/tablet is no more than a large phone (which is also a lie).

- The two devices are explicitly codependent.

This is really bad! It further solidifies the view that phones are “just” phones, and that tablets are “just” big phones. I have taken notes, written papers, and read books on my iPhone. The fact of the matter is that this device is powerful and capable of producing real work that I have gotten graded, real research that I have used to write papers and blog posts, and real communication with people oceans away. The reason that I have an iPad and an iPhone is that I want two separate devices, not some crazy Frankenstein monster of a device. There are times that I need to work on just one device, and, let’s face it, sometimes we just forget one at home. The key isn’t creating a physical bridge between the two that mandates the existence of one in order for the other to be used; it’s creating an invisible backbone that allows these devices to share information seamlessly, so that the user can put one down, pick the other up, and resume working exactly where he or she left off. There have been hopes of iOS “state” cloud syncing for a little while, and this is truly where this needs to go.

We don’t need devices that are tethered together using wires and plugs, we need devices and services that are smart enough to get out of the way and let our intention take center stage.

Update: Corrected spelling of “Padfone.”

Use the Force, Paul, or: How I Beat the AT&T Death Star

Posted: March 25, 2011 Filed under: explanations, innovations, life integration, me, tech | Tags: apple, AT&T, data, data-only, google voice, GSM, ipad, iphone, SIM, swap, web portal

In case you haven’t noticed, the 21st century is upon us. Aside from my flying car, there are a few other neat Jetson gadgets that I’m looking for that I have, in a way, already found.

One of the other things that I’m looking for is freedom from this ridiculous carrier-centric phone world. There should be no reason that an iPhone (or any other phone, for that matter) cannot be used on other networks (barring technological incompatibilities between technologies like GSM and CDMA). There should also be no reason for carriers to charge me an exorbitant amount of money for “minutes” that I do not use. Before reaching through the void into the world of sweet, sweet data, my monthly phone bill was around $175.00 for two lines, unlimited messaging, 700 voice minutes, and unlimited data. I had almost 4,000 rollover minutes accrued since I re-upped my plan last July (when I got the iPhone 4).

Four. Thousand.

Clearly, the majority of my monthly bill (about $80.00) was being put towards minutes that I was very rarely using. Some months would see both phones using less than 100 minutes combined. I was paying for more minutes than I would ever want to use, but there was no way for me to get a data-only plan on my phone unless I a) could prove that I was hearing-impaired, or b) devised some way to get a data-only SIM card and somehow provision my phone to take advantage of that.

I went with option b.
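To put the voice portion of that bill in perspective, here is a quick back-of-the-envelope sketch. It uses only the figures quoted above (about $80.00 a month for 700 minutes) and assumes a light month of 100 minutes used across both phones, which is the rough ceiling I mentioned rather than an exact reading from any bill. The function name is just something I made up for the illustration.

```python
# Figures quoted in the post; MINUTES_USED is an assumed "light month" value.
VOICE_COST_PER_MONTH = 80.00   # dollars, the "about $80.00" share of the bill
PLAN_MINUTES = 700             # minutes included in the plan
MINUTES_USED = 100             # assumption: rough ceiling for a quiet month


def cost_per_minute(monthly_cost: float, minutes: int) -> float:
    """Return dollars per minute, rounded to the cent."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return round(monthly_cost / minutes, 2)


# Price per minute if every plan minute were used versus a quiet month.
advertised = cost_per_minute(VOICE_COST_PER_MONTH, PLAN_MINUTES)
effective = cost_per_minute(VOICE_COST_PER_MONTH, MINUTES_USED)

print(f"On paper: ${advertised:.2f}/min, in a quiet month: ${effective:.2f}/min")
```

In other words, the less I talked, the more each minute cost me, which is roughly the opposite of how I wanted to be billed.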

What I noticed when I first started playing with my first iPad was that the SIM cards in both the iPad and iPhone 4 are of the “Micro-SIM” variety, which means they’re just a fraction of the size of a normal SIM card. Surely there had to be a way to use the iPad SIM card in the iPhone, right?

Sadly, a quick swap of the SIM cards yielded no results for the iPhone, and while the iPad could receive data, it couldn’t make any calls. Not that I’d want to hold that up to my head to talk, anyway. I gave up on the idea of a cheap pocket web portal and decided that I’d just start sterilizing my arm for removal.

Fast forward almost a year, over a thousand dollars in payments to the Empire, and I’m fed up. I don’t need this. Time to bust out my Jedi skills on this Death Star.

The key player in all of this is a powerful and evolving service that Google offers called Google Voice. For those familiar with the service, Google Voice can be leveraged to free your number from your carrier and place it “in the cloud,” allowing you to open up a new line of service with any carrier, but with a little extra weight behind your bargaining because you don’t have to purchase a heavily subsidized phone. Plans can be purchased on a month-to-month basis instead of on a contractual basis. Negotiating with those carriers can be tough, though, so you’ll have to brush up on your Jedi Mind Tricks.

Porting Your Number

The first thing you’ll need to do is port your number over to Google Voice. For true freedom, this is really the only way to go. When I had separate Google Voice and AT&T phone numbers, people were simply confused when I would contact them from one or the other. They’d constantly be asking me which number was my “real” number, or why I keep changing phones. For my friends, it didn’t matter that much. For my family, it was confusing. I’ve always been on the cutting edge of technological trends, and trying to explain this cutting-edge VOIP service was difficult, especially since my parents have had the same phone plans for the better part of a decade. Porting is easy, but there are a few things you need to know. Here’s what it boils down to:

- Porting your number to Google Voice will cancel your current phone line with your carrier. This is effective almost immediately, despite taking a while for the transition to complete on the back end.

- Google charges you $20.00 for porting your number.

- If you are still under contract with your carrier, you are on the hook for the ETF (early termination fee). This can be pretty high, depending on how much time you have left before your contract is up.

- Text messages will take several days to route properly. If, like me, you sometimes suffer from communication overload, this will be a blessing for you. When people ask you if you got their message, you can legitimately say, “Nope, I was porting my number over to another carrier.” Done deal.

- You cannot make outgoing calls using Google Voice. Technically. You can, however, use Google Voice to approximate the normal “phone” experience really well. I’ll go into that soon.

- Google Voice software for the iPhone leaves a lot to be desired. It works, it’ll get you where you need to go, but none of it is perfect. I’m sure Google will get around to updating its iPhone app eventually, but it needs a lot of work right now. Just a heads-up.

Setting Up Seamless Calling

This is tricky. I’m not going to lie, I was extremely frustrated with my calls until I explored my options a bit. You can benefit from my experimentation here.

Google Voice isn’t a phone. Instead, Google Voice connects phone numbers together. For the tinfoil hat crowd out there, this might be a dealbreaker. Google is going to have your voice passing through their servers, period. There’s no way to do this without having Google act as the middleman. I don’t care about this, because I figure they’ve got enough data on me already. If you’re already here, though, you probably don’t care too much about that.

Because Google Voice doesn’t actually make any calls, you have to find a reliable way to receive calls on your phone without actually paying for minutes. I found the solution in a couple of places. Skype and TextFree are both services with various degrees of free and paid options that provide VOIP service. Of those two, I’d say that TextFree is definitely, unequivocally, the best option I tested. The basic process for both, however, is the same. With Skype, you’re going to need two paid plans to properly route calls: one plan to allow unlimited incoming and outgoing calls, and another to give you an “online number” that people can call. The combined cost of these two services is around $60.00. Not bad, especially considering that this gives you a year of unlimited calling to US-based numbers. You then need to add your newly-purchased Skype number to your Google Voice settings. Under normal circumstances, Google Voice would then call you, ask you to enter the code it displays on the screen, and you’d be all set. This is where it starts to break down.

I will say this as plainly and clearly as I can: Skype’s app is horrible. When I say horrible, I mean absolutely awful. I don’t know if they gave the coding and design over to a bunch of blind, epileptic monkeys or if they’re really just that bad. At this point, if they told me the monkey story, I’d say it makes sense. The fact that this software got out the door under human watch, however, is not good. There are so many failings, but here’s the biggest one: the Skype app doesn’t use Apple’s standard push notifications, it uses some sort of bastardization of local notifications. The end result is that 9/10 attempts to contact you will be lost to voicemail, and 9/10 attempts to contact someone else will result in that person being greeted by dead air. I could really go on and on, but it’s best you read my review on iTunes. It’s scathing.